How to create thought leadership surveys that get results

James Watson

Are you spending a lot of time and money on your thought leadership surveys, only to be disappointed by the findings? There are three things you might be doing wrong.

Survey data underpins a lot of today’s corporate thought leadership – and for good reason. For many popular topics, such as AI adoption or what the post-pandemic workplace might look like, there is often little or no existing data to draw on – and if there is, it is often backward-looking. And while expert interviews can give great individual examples of what companies are doing, they cannot confirm whether something is a trend.

But marketing teams often struggle to commission thought leadership surveys effectively. They tend to ask too many questions, overcomplicate the target audience and overestimate how many responses they need.

The result? Far too much budget is wasted on the research input, and the study’s findings often end up undermined by the initial lack of focus.

Three steps to better thought leadership surveys

1. Consider a shorter, tighter questionnaire

One of the most common problems we see is companies trying to squeeze everything possible into a survey questionnaire, until it becomes a long, labyrinthine set of questions.

This adds both cost and complexity, and it rarely leads to a good outcome. The eventual content almost never uses every question, so much of the data you paid for is left on the cutting room floor.

The dangers of ‘spray and pray’

A long set of questions suggests a lack of clarity within the team about what the study should explore. In the absence of clarity, many companies default to ‘spray and pray’: let’s ask everything we can think of, and hopefully some of it will be worth reporting.

This has three consequences:

- It sharply increases the time and team input necessary to get the study agreed

- It raises the risk of less interesting data, because fatigued respondents run out of steam

- It makes the final analysis much more difficult.

What do you really want to know?

Shorter thought leadership surveys encourage teams to focus on what they really want to investigate. Taken to the extreme, some excellent examples consist of just one question.

Take the World Happiness Report. It gets extensive coverage globally, but its headline ranking is based on a single question that asks respondents to rate their current life on a scale of zero to 10. Another example is ManpowerGroup’s long-running survey of hiring trends. This asks hiring managers a single question about their hiring intentions for the quarter ahead, and is a good barometer of the health of the labour market.

For respondents, shorter surveys improve engagement and focus their thinking. And this improves the quality of the responses.

You don’t need to compress everything down to a single question, but it is worth asking whether you really need 20 questions (or more). Could five or 10 give you the same outcome? A tighter focus will save you a lot of time and cost, and can often improve the quality of your outcome.

2. Think about a simpler target sample

A second common problem is excessively precise and complicated audience specifications. For example, teams set very precise quotas for specific segments of the population (or, worse, interlocking quotas) on all kinds of respondent criteria, from job function and seniority to company size and sub-sector.

There are several trade-offs here.

Complexity trade-offs

The first is financial: the narrower and more specific the target sample, the more costly it will be. Our analysis suggests that companies spend anything up to six times more for a precise specification than for an equivalent audience without the tough quotas and niche role requirements. For example, a survey of 300 CIOs recruited to precise quotas on industry, country and company size can easily cost two to four times as much as a survey of 300 senior IT leaders globally. Is the resulting data really two to four times more valuable?

The second trade-off is time. More complex B2B specifications can add anything from two to 12 weeks in the field – on top of the typical four-week fieldwork timings. This pushes back your launch planning, and extends the overall time you invest in the project. It also increases the risk of something unexpected happening during the fieldwork phase – a pandemic, say, or a stock market crash – which affects the validity of your data.

Finally, there is the added pressure that comes with a bigger overall investment. Will your stakeholders really value a more targeted sample? More than additional content, for instance, or flashier design?

3. Are more respondents really necessary?

What size sample do you need for good statistical significance? A larger sample size reduces the margin of error on a study, but you get diminishing returns after you reach a certain number of responses.

Bigger is not always better

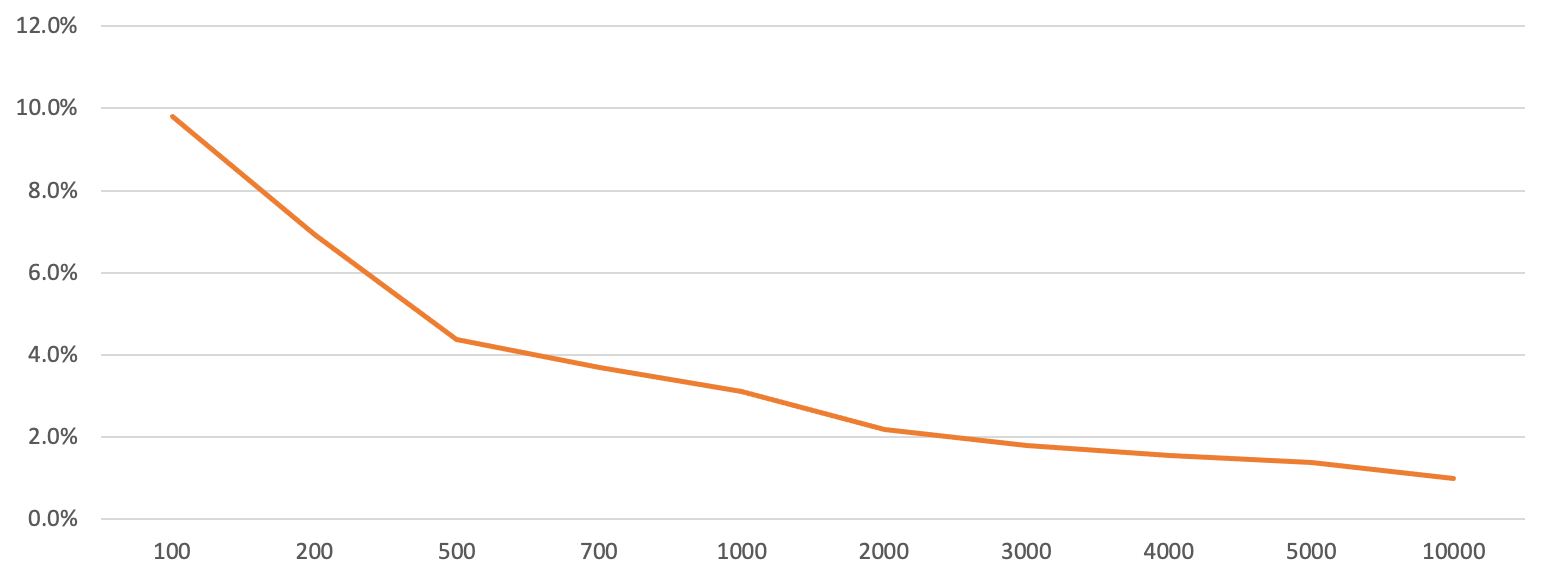

The chart shows that getting up to 500 respondents sharply improves the margin of error, from about 10% at 100 respondents to about 4%. This suggests a strong reduction in the risk of anomalous responses that will distort your findings.

However, increasing the sample size from 500 to 1,000 only modestly nudges the margin of error, down to about 3%, even though you have doubled your investment. Increases beyond 1,000 yield only marginal further gains.

SOURCE: McGill, percentage margin of error by sample size, 95% confidence interval (CI)
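The diminishing returns described above follow from the standard margin-of-error formula for a proportion. The sketch below is a minimal illustration rather than the method behind the chart: it assumes a simple random sample, a 95% confidence level (z = 1.96) and the most conservative case of a 50/50 split in answers (p = 0.5). The function name `margin_of_error` is our own.

```python
import math

Z_95 = 1.96  # z-score for a 95% confidence level (assumption)


def margin_of_error(n, p=0.5, z=Z_95):
    """Margin of error for a proportion, assuming a simple random sample of size n.

    p=0.5 is the worst case: it gives the widest possible margin.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    # Compare the sample sizes discussed in the text.
    for n in (100, 500, 1000, 2000):
        print(f"n={n:>5}: +/-{margin_of_error(n) * 100:.1f} percentage points")
```

Because the margin shrinks with the square root of the sample size, halving it means roughly quadrupling the number of respondents, which is why the curve flattens so quickly.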

Context makes a difference

You also need to consider the different contexts of consumer polls and business surveys. If you are going to ask citizens how they plan to vote, for instance, then a large sample will give you a more realistic snapshot of what is often a tightly contested outcome.

But for B2B samples, the total potential universe of respondents may be very small. Imagine a theoretical survey of FTSE 100 CEOs about their views on Brexit, for example. This would by definition be limited to a maximum of 100 respondents, and getting even 30 responses would be newsworthy and valid.

Whether you are seeking to survey CFOs at large global companies or risk managers at banks, remember that the total universe is smaller, so a small sample can still be valid and statistically meaningful. It is always worth taking a step back to ask yourself whether the investment in a larger overall sample is truly necessary and a good use of your budget.
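One way to see the effect of a small universe is the finite population correction, a standard adjustment that narrows the margin of error when the sample makes up a large share of the total population. The sketch below is illustrative only: it assumes a simple random sample, a 95% confidence level and a 50/50 split in answers, and the function name `margin_of_error_finite` is our own.

```python
import math


def margin_of_error_finite(n, population, p=0.5, z=1.96):
    """Margin of error for a proportion, adjusted for a finite population.

    Assumes a simple random sample of n drawn without replacement
    from `population` possible respondents.
    """
    if not 0 < n <= population:
        raise ValueError("n must be between 1 and the population size")
    if n == population:
        return 0.0  # a full census has no sampling error
    base = z * math.sqrt(p * (1 - p) / n)
    correction = math.sqrt((population - n) / (population - 1))
    return base * correction


if __name__ == "__main__":
    # The FTSE 100 example: 30 responses from a universe of 100 CEOs,
    # compared with 30 responses from a very large universe.
    small = margin_of_error_finite(30, 100)
    large = margin_of_error_finite(30, 1_000_000)
    print(f"30 of 100:       +/-{small * 100:.1f} percentage points")
    print(f"30 of 1,000,000: +/-{large * 100:.1f} percentage points")
```

Under these assumptions the same 30 responses carry a tighter margin when they come from a universe of 100 than from a very large one, although the margin remains much wider than for a sample in the hundreds.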

About the author: James Watson

James is our chief operating officer and co-founder. He oversees project delivery and all the processes involved. In this role, he helps manage and coordinate the research, editorial and project management teams in order to deliver high-quality thought leadership campaigns.

As an editor, he has over 15 years of experience in both business journalism and research. Some of his specialist areas of coverage include IT and technology, urban issues, energy and sustainability. Prior to co-founding FT Longitude, James spent five years at the Economist Group in the UK. Earlier, he worked in both South Africa and Singapore, reporting on the dot-com boom (and bust). He is now based in Zurich, Switzerland.

Tel: +44 (0)20 7873 4770
