Why thought leadership brands must be foxes, not hedgehogs

Rob Mitchell

In his book The Signal And The Noise, data journalism pioneer Nate Silver draws a distinction between two types of thinkers. ‘Hedgehogs’ are those who view the world through the lens of a single idea, whereas ‘foxes’ are those who draw on a wide variety of different experiences.

For Silver, newspaper opinion columns – which he hates – are the work of hedgehogs. “They pull threads together from very weak evidence and draw grand conclusions from them,” he explained in New York magazine.

Foxes, by contrast, take a pluralistic approach, drawing evidence from multiple sources and applying critical thinking to how these datasets should be used. For Silver, foxes exemplify the relatively new style of ‘data journalism’, which is the model that sits at the heart of his news organisation FiveThirtyEight. It is no coincidence that the logo on the website is – yes, you guessed it – a fox.

Data journalism and thought leadership

The world of data journalism has close parallels with the rise of thought leadership. And here at FT Longitude we believe that producers of thought leadership also need to think like foxes.

We need to produce content that is based on evidence drawn from multiple sources – not just opinion. Evidence-based thought leadership has credibility; content based on opinion alone, especially when published under a multinational’s own brand, will be viewed with suspicion.

This is why research tools such as surveys are so widely used. By gathering evidence from a large number of third-party respondents, surveys help to elevate thought leadership above mere opinion, providing a credible foundation for a brand’s key messages.

Yet while surveys – and other quantitative research inputs – are hugely valuable, they need to be used carefully. Just because something is founded on data doesn’t mean it’s correct – as the data visualisation expert Alberto Cairo notes in a fascinating essay, we can ‘prove’ just about anything with data. He cites the example of an article on Vox (another data journalism proponent) which suggests that wearing a helmet while riding a bike may be bad for you. This is based on data, yet Cairo argues that the authors have carelessly cherry-picked studies and connected them to support an idea that has little basis in fact.

Save the planet: bring back pirates…?

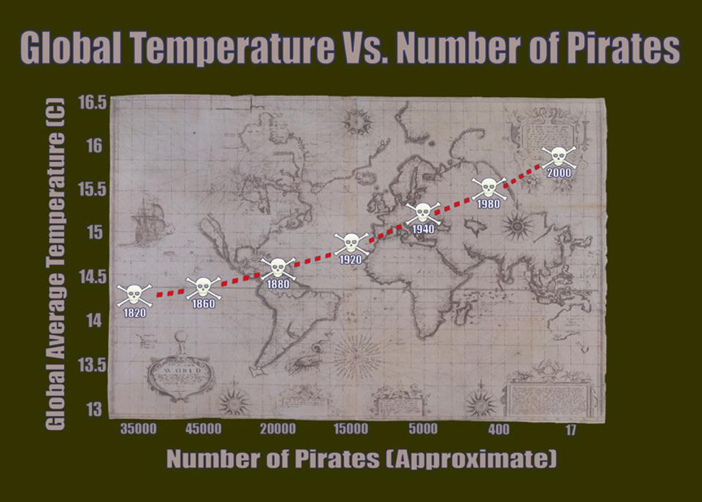

Confusion between correlation and causation is a common underlying problem with data analysis. To understand what we mean by this, take a look at the graph. It shows that, as the number of pirates in the world has fallen over the past century or so, global warming has got steadily worse. So, read at face value, this data says that having fewer pirates on the high seas these days is a key cause of global warming.

It’s a flippant example, but an important point. If we are going to base thought leadership on research inputs like surveys and other data, we need to be very careful about the conclusions we draw from them. Confusion between correlation and causation, ambiguous or leading survey questions, and selection bias can all cause major problems, leading to muddled thinking and conclusions that are based on incorrect assumptions.
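For anyone who wants to see the pirate problem in numbers, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the two series are synthetic and invented (they are not real pirate or temperature figures), and the names `pearson`, `falling` and `rising` are hypothetical. It shows that two unrelated series which both drift over time can look almost perfectly correlated, and that the relationship largely disappears once you compare year-on-year changes instead of levels.

```python
import random
import statistics


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(0)  # fixed seed so the sketch is repeatable
years = range(100)

# Synthetic, made-up series (assumptions, not real data):
# one drifts downwards over time, the other drifts upwards.
# Each has its own independent random noise.
falling = [1000 - 8 * t + rng.gauss(0, 30) for t in years]
rising = [14.0 + 0.01 * t + rng.gauss(0, 0.05) for t in years]

# Correlating the raw levels mostly picks up the shared time trend.
r_levels = pearson(falling, rising)

# Year-on-year changes strip out the trend and leave only the noise.
d_falling = [b - a for a, b in zip(falling, falling[1:])]
d_rising = [b - a for a, b in zip(rising, rising[1:])]
r_changes = pearson(d_falling, d_rising)

print(f"correlation of levels:  {r_levels:.2f}")
print(f"correlation of changes: {r_changes:.2f}")
```

Comparing changes rather than levels is only one simple check, and passing it does not prove causation either. It is a quick way to test whether an eye-catching correlation is really just two trends sharing a timeline.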

This matters. Last year, FT Longitude conducted a survey of thought leadership producers and consumers. One of our key findings was that 76% of executives say that thought leadership content can help them make better decisions. Well, yes – but only if the research underpinning that content has been properly designed and analysed. If it hasn’t, then the decisions they take will probably be the wrong ones.

Pluralism and precision

So we need to be foxes, not hedgehogs, but this distinction on its own is not enough. Good thought leadership, like good data journalism, relies on critical thinking and robust research and analysis. It’s important to be pluralistic in how we use data, but this needs to be complemented with plenty of rigour – and scepticism.

So if you come across any foxes with a good grounding in statistics, send them our way. They’re exactly the kind of characters we like to recruit.

About the author: Rob Mitchell

Rob is our CEO and co-founder. He leads FT Longitude’s strategic planning and sets the overall vision and priorities for the business. He manages the board-level relationship with FT Longitude’s parent company, the Financial Times group, and also oversees FT Longitude’s finances, people management and administration.

Prior to co-founding FT Longitude in 2011, Rob was an independent writer and editor. Between 2007 and 2010, he was a managing editor at the Economist Intelligence Unit and prior to that he was an editor at the Financial Times, where he was responsible for the newspaper’s sponsored reports, including the Mastering Management series.

Tel: +44 (0)20 7873 4770